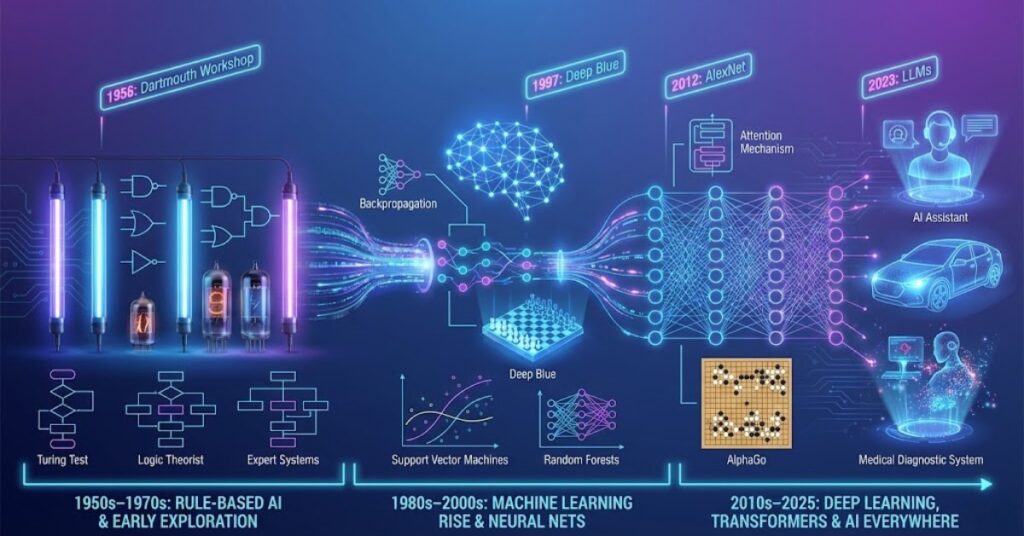

Artificial Intelligence today feels like the most advanced technology humanity has ever touched. Tools like ChatGPT, self-driving cars, image generators, and smart assistants appear almost magical to everyday users. But modern AI did not appear suddenly. It grew through decades of ideas, experiments, failures, breakthroughs, and technological leaps. The journey from the early 1950s to 2025 is a story of curiosity, ambition, and persistence. AI has changed direction many times, shifting from symbolic reasoning to machine learning, then to deep learning, and finally to generative AI capable of creating text, images, music, and even entire virtual worlds. This article walks through each major stage of AI’s evolution, explaining the key ideas and showing how they shaped the technology we use today.

1. The Birth of AI (1950s)

The story of AI begins long before computers became powerful. In the early 1950s, scientists started asking a simple but radical question – “Can machines think?” That question inspired the earliest theories of artificial intelligence.

Alan Turing and the First Big Idea

In 1950, British mathematician Alan Turing published a famous paper titled “Computing Machinery and Intelligence.” In it, he introduced the Turing Test, a way to measure whether a machine could imitate human conversation well enough to fool a person. The test didn’t create AI, but it gave the field a goal – build machines capable of intelligent behavior.

The First AI Programs

Primitive AI programs soon followed, running on computers that were extremely slow by today’s standards. Two early examples shaped the entire field:

- Logic Theorist (1956) – A program that could prove mathematical theorems.

- General Problem Solver (1957) – A system designed to solve logic problems using step-by-step reasoning.

These early programs helped launch a new research area. The term “Artificial Intelligence” was coined by John McCarthy in the proposal for the 1956 Dartmouth Conference, the event widely regarded as the beginning of AI as a scientific field.

2. The Early Growth and High Expectations (1960s–1970s)

Once scientists realized that machines could follow logical rules and solve problems, excitement grew rapidly. AI researchers believed they might achieve human-level intelligence within a generation. But computers at the time were slow, expensive, and had almost no memory. Still, important progress was made.

Symbolic AI – Teaching Machines Through Rules

The dominant idea during this period was Symbolic AI, also known as GOFAI (Good Old-Fashioned AI). Here, the goal was to program machines with explicit human knowledge.

Systems were built using:

- if–then rules

- decision trees

- logical reasoning

- expert knowledge databases

The most famous examples were early expert systems, which tried to imitate human specialists by storing facts and rules.
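To make the idea of storing facts and rules concrete, here is a minimal sketch of a rule-based system in Python. The rules and fact names are hypothetical and invented for illustration; they are not taken from any historical expert system. The engine simply keeps applying if–then rules until nothing new can be concluded.

```python
# Hypothetical rules for illustration; not taken from any historical expert system.
# Each rule is (set of conditions, conclusion): IF all conditions hold THEN conclude.
RULES = [
    ({"has_fever", "has_cough"}, "possible_flu"),
    ({"possible_flu", "short_of_breath"}, "see_doctor"),
]


def forward_chain(facts, rules):
    """Repeatedly apply if-then rules until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"has_fever", "has_cough", "short_of_breath"}, RULES)
print(sorted(derived))
```

Everything the program "knows" was typed in by a person. If a situation is not covered by a rule, the system has nothing to say about it.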

Natural Language and Robotics Begin

The 1960s also saw early attempts at understanding language and controlling robots. ELIZA (1966) was one of the first chat programs, capable of mimicking a therapist using simple text patterns. Robots like Shakey (1969) could navigate rooms and make decisions based on basic sensor data.

While impressive for the time, these systems were fragile. They failed when problems became too complex or when the rules didn’t match real-life situations. This led to the first major slowdown in AI progress.
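The pattern-matching approach behind ELIZA, and the fragility described above, can be sketched in a few lines of Python. The patterns and replies below are hypothetical stand-ins chosen for illustration; the original ELIZA script was larger and worked differently in its details.

```python
import re

# Hypothetical patterns for illustration; not the original ELIZA script.
PATTERNS = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."


def respond(message):
    """Return the reply for the first matching pattern, or a generic fallback."""
    text = message.strip().rstrip(".!?")
    for pattern, template in PATTERNS:
        match = pattern.search(text)
        if match:
            # Reuse the user's own words inside a canned reply.
            return template.format(match.group(1))
    return FALLBACK


print(respond("I feel tired today."))
print(respond("The weather is nice."))
```

The program has no understanding of what is being said. Any sentence that does not match a pattern falls through to the same generic reply, which is exactly the kind of brittleness that held these systems back.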

3. The AI Winter (Late 1970s–1980s)

By the mid-1970s, AI faced enormous difficulties. Funding was cut, research slowed, and public excitement faded. This period became known as the AI Winter, a time when progress stalled because expectations had been too high.

Why AI Struggled

Several challenges caused this winter:

- Computers were too weak to support advanced AI.

- Rule-based systems could not handle unpredictable real-world data.

- Early programs broke easily when problems changed slightly.

- Governments reduced funding, believing AI was a dead end.

Despite the slowdown, a few areas continued developing quietly, especially expert systems and basic neural network research.

4. The Rise of Machine Learning (1990s–2000s)

AI rebounded during the 1990s thanks to one major idea: instead of giving machines rules, let them learn from data. This new approach became known as machine learning (ML).

From Rules to Patterns

Machine learning allowed computers to improve by analyzing examples rather than memorizing instructions. Instead of telling a machine how to identify a cat, researchers fed it thousands of cat images and let it discover the patterns.

Machine learning enabled breakthroughs in:

- email spam detection

- early speech recognition

- handwriting recognition

- basic recommendation systems

This made AI far more practical than the old rule-based approach.
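As a rough illustration of learning from examples instead of hand-written rules, here is a minimal sketch of a spam filter based on naive Bayes, one classic technique for this task. The training messages are made up, and the function names are assumptions chosen for this example. Nobody tells the program which words signal spam; it works that out from word counts.

```python
import math
from collections import Counter

# Tiny made-up training set for illustration only.
TRAINING = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Pick the label with the highest log-probability (add-one smoothing)."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    total_examples = sum(label_counts.values())
    scores = {}
    for label in word_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_examples)
        for word in text.split():
            count = word_counts[label][word] + 1  # add-one smoothing
            score += math.log(count / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


word_counts, label_counts = train(TRAINING)
print(classify("claim your free money", word_counts, label_counts))
print(classify("agenda for the team lunch", word_counts, label_counts))
```

Changing the behavior means changing the training data, not rewriting the program, which is the practical advantage over the rule-based approach.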

Key Milestones

Several important events defined this era:

- 1997 – IBM’s Deep Blue beats world chess champion Garry Kasparov. This showed that machines could outperform humans in specific tasks using computational power and algorithms.

- 2000s – Big data and the internet explode. Large datasets allowed machine learning models to train more effectively.

- Introduction of Support Vector Machines, Random Forests, and Gradient Boosting. These algorithms made ML more accurate and reliable.

This era laid the foundation for what came next – deep learning.

5. Deep Learning Revolution (2010s)

If the 1990s gave AI a fresh start, the 2010s transformed it completely. The introduction of deep learning, combined with more powerful computers and large datasets, led to a wave of breakthroughs.

What Makes Deep Learning Different?

Deep learning uses neural networks with many layers, inspired loosely by the human brain. These networks can extract patterns automatically, even from complex data like images, sound, and natural language.
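To show what "layers" means in practice, here is a minimal sketch of a two-layer network in plain Python. The weights are hand-picked for illustration, which is an assumption made to keep the example short; real networks have far more layers and learn their weights from data. The network computes XOR, a pattern that a single layer of weighted sums cannot represent.

```python
def relu(values):
    """Keep positive values and replace negative ones with zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


# Hand-picked weights (an assumption for illustration; real networks learn these).
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]


def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = relu(dense(inputs, HIDDEN_W, HIDDEN_B))
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]


for pair in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(pair, forward(pair))
```

The hidden layer turns the raw inputs into intermediate features, and the output layer combines those features. Deep networks repeat this stacking many times.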

Deep learning excels at:

- image recognition

- voice assistants

- translation

- autonomous driving

- video analysis

Major Breakthroughs

Some of the defining achievements of this era include:

- 2012 – AlexNet wins the ImageNet competition, proving deep learning’s power.

- 2016 – AlphaGo defeats world champion Lee Sedol, showing that AI could master complex strategy.

- Neural networks become essential for smartphones, cameras, and apps.

This decade changed AI from an academic dream into a real-world technology.

6. Generative AI and the Transformer Era (2020–2025)

The most dramatic transformation in AI came with the emergence of transformers, a new neural network architecture introduced in 2017. Transformers became the foundation of modern AI models like GPT, Claude, Gemini, and Llama.

Why Transformers Changed Everything

Transformers allow machines to understand long sentences, follow context, and generate human-like language. They can read articles, write essays, answer questions, and even create images or videos.
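The ability to follow context comes largely from a mechanism called attention, which lets every token weigh every other token when building its own representation. Below is a minimal sketch of scaled dot-product attention in plain Python. The token vectors are made up, and real transformers first pass the inputs through learned projections to get separate queries, keys, and values, a step this sketch leaves out.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Similarity between this query and every key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        mixed = [
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs


# Three made-up 2-dimensional token vectors, used as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

Because every token looks at all the others in one step, distant words in a long sentence can influence each other directly.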

Their abilities include:

- summarizing long documents

- writing code

- generating high-quality images

- analyzing data

- multilingual translation

- reasoning and problem solving

In short, they turned AI into a general-purpose assistant.

Explosion of Generative Tools

From 2020 to 2025, hundreds of generative AI tools emerged:

- text generators

- speech generators

- image and art models

- video creators

- music generators

- AI coding assistants

This period represents the fastest growth in AI history.

7. AI in 2025 – Where We Stand Today

By 2025, AI is deeply embedded in daily life. It powers apps, business systems, medicine, entertainment, and communication. Unlike in the early days, AI is now capable of creativity and reasoning-like behavior.

Where AI Is Used in 2025

- healthcare diagnosis

- self-driving systems

- customer service automation

- fraud detection

- personalized education

- smart assistants

- robotics

- scientific research

AI is no longer just a tool; it has become a digital partner.

Conclusion

The evolution of AI from the 1950s to 2025 is a journey of imagination, failure, reinvention, and breakthrough discoveries. What started as simple logic programs has grown into generative models capable of writing, reasoning, and assisting millions of people. Each era contributed something essential: symbolic reasoning built the foundation, machine learning unlocked pattern recognition, deep learning made perception possible, and transformers created the modern AI revolution. The future remains uncertain, but one thing is clear: the story of AI is far from over. As technology continues to advance, the next breakthrough may again transform our understanding of intelligence itself.