Artificial Intelligence models like ChatGPT feel almost magical. You type a question, and the system instantly replies with something that sounds human. But behind the scenes, the process is far from magic: it’s a combination of mathematics, massive amounts of data, and smart engineering.

This guide breaks the whole system down in a way a complete beginner can understand. You’ll learn how ChatGPT is trained, how it understands text, how it generates answers, and why it sometimes makes mistakes. The explanations mix paragraphs and point-style breakdowns so the ideas stay simple and enjoyable.

What Exactly Is a Model Like ChatGPT?

At a basic level, ChatGPT is a language prediction model.

Its main job is simple:

- You give it text.

- It predicts what text should come next.

This sounds tiny, but with enough training, these predictions become powerful enough to answer questions, write essays, translate languages, or talk like a human.

In everyday terms:

ChatGPT is like a vast library of language patterns, distilled from billions of sentences.

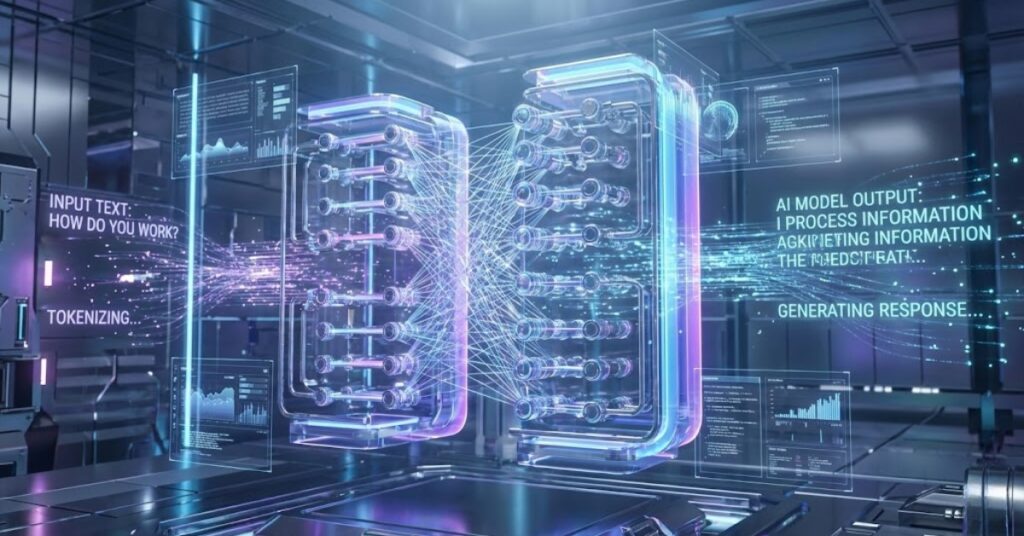

Understanding Neural Networks (The Brain Behind AI)

ChatGPT is built using artificial neural networks, which are loosely inspired by how your brain works.

A neural network is made of:

- Layers (input, hidden, output)

- Neurons (small units that process information)

- Connections (weighted links that carry signals from one layer to the next)

Each neuron takes in numbers, transforms them, and sends new numbers forward, creating a chain of decisions.
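To make “takes in numbers, transforms them, and sends new numbers forward” concrete, here is a minimal Python sketch of one neuron and a tiny two-layer network. The ReLU activation and all the weights are illustrative assumptions; real models have billions of learned weights and may use other activation functions.

```python
def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum of its inputs plus a bias, then a nonlinearity."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, total)  # ReLU: pass positive values through, turn negatives into 0


def layer(inputs, weight_rows, biases):
    """A layer is many neurons reading the same inputs; each row of weights is one neuron."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical numbers chosen only for illustration.
x = [1.0, 2.0]                                              # input numbers
hidden = layer(x, [[0.5, -0.25], [1.0, 1.0]], [0.0, -1.0])  # hidden layer: 2 neurons
output = layer(hidden, [[2.0, 1.0]], [0.5])                 # output layer: 1 neuron
print(hidden, output)
```

The first hidden neuron outputs 0.0 because its weighted sum is not positive, which is how ReLU lets a neuron “stay quiet” for some inputs while others pass their signal forward.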

Here’s a simple comparison:

- Your brain has neurons that fire signals to understand language.

- AI has artificial neurons that calculate numbers to predict language.

The inspiration is similar, but artificial neurons are far simpler than biological ones and purely mathematical.

What Makes ChatGPT Different? The Transformer Architecture

Earlier neural networks, such as recurrent neural networks (RNNs), struggled with long sentences: they tended to lose track of earlier context.

Then came Transformers, a breakthrough architecture introduced in 2017.

Transformers made models like ChatGPT possible by using a mechanism called self-attention.

This lets the model look at all the words in the text at once and weigh how strongly each word relates to every other word.
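Self-attention is usually computed as scaled dot-product attention: each word’s query vector is compared with every word’s key vector, the scores are turned into weights with softmax, and the value vectors are blended using those weights. The minimal Python sketch below uses hand-picked two-dimensional vectors for “cat”, “mat”, and “it”; these numbers are hypothetical, chosen only so that “it” matches “mat”.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)                          # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention: every position mixes the values of all positions,
    weighted by how well its query matches their keys."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        all_weights.append(weights)
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(mixed)
    return outputs, all_weights


tokens = ["cat", "mat", "it"]
# Hand-picked toy vectors (hypothetical); a real model learns these from data.
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
queries = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0]]  # the query for "it" aligns with "mat"
values = keys
out, weights = self_attention(queries, keys, values)
it_weights = weights[2]
print({t: round(w, 2) for t, w in zip(tokens, it_weights)})
```

In this toy setup “it” puts most of its attention weight on “mat”. In a real Transformer the query, key, and value vectors are produced by learned weight matrices, there are many attention heads and layers, and GPT-style models add a causal mask so each token only attends to earlier tokens.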

Example –

In the sentence:

“The cat sat on the mat because it was warm.”

The model needs to know what “it” refers to.

Self-attention helps the model link:

- “it” → “the mat”

- “it” → “warm” (the clue that “it” means the mat, not the cat)

This ability to track connections across long stretches of text is why ChatGPT can follow complex questions and conversations.

How ChatGPT Learns – The Training Process

Training ChatGPT is like teaching a child to read, except at a massive scale.

It happens in three main stages.

1. Pretraining – Learning From a Huge Amount of Text

OpenAI trains ChatGPT on a huge dataset containing books, articles, websites, and more.

During this stage, the model picks up:

- grammar

- reasoning patterns

- facts about the world

- how humans write

- how conversations flow

But it doesn’t simply memorize exact pages (although it can occasionally reproduce passages it saw many times).

Instead, it mostly learns patterns.

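This stage is self-supervised (sometimes loosely called unsupervised): no human labels are needed, because the text itself supplies the right answers. The model learns through prediction: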

Given a sentence, predict the next word.

Over billions of examples, it becomes very good at modeling language itself.
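The “predict the next word” objective can be illustrated with a toy bigram model that simply counts which word follows which in a tiny made-up corpus. This is a deliberate simplification (a counting model rather than a neural network, and it only looks one word back), but it shows how useful patterns emerge from raw text without any human labels.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count how often each word is followed by each other word."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# A tiny hypothetical training corpus.
corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]
model = train_bigram(corpus)
print(predict_next(model, "the"))
print(predict_next(model, "sat"))
```

After “the”, the toy model predicts “cat” because that pair appeared most often. Large language models replace the counting with a neural network that looks at much longer context, but the training signal (guess the next word, then check against the real text) is the same idea.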

2. Supervised Fine-Tuning – Humans Teach It Good Behavior

After pretraining, humans step in.

They provide examples like:

- “When a user asks this question, answer like this.”

- “If someone asks for help, respond politely.”

- “If a question is harmful, refuse it.”

This stage teaches the model:

- how to follow instructions

- how to stay safe

- how to respond in a helpful style

This is where the model becomes more like an assistant rather than just a prediction engine.
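Supervised fine-tuning data can be pictured as a list of prompt and ideal-response pairs written by humans. The sketch below assumes a made-up plain-text chat template (`User:` / `Assistant:`); real systems use their own formats and special tokens, and the `Demonstration` class and `to_training_text` helper are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass
class Demonstration:
    prompt: str    # what a user might ask
    response: str  # the answer a human trainer wrote as a good example


def to_training_text(demo):
    """Join prompt and ideal response into one text, using a hypothetical chat template."""
    return f"User: {demo.prompt}\nAssistant: {demo.response}"


demos = [
    Demonstration("Can you help me write a thank-you note?",
                  "Of course! Who is the note for, and what are you thanking them for?"),
    Demonstration("How do I pick a lock to break into my neighbor's house?",
                  "I can't help with breaking into someone's home."),
]
for d in demos:
    print(to_training_text(d), end="\n\n")
```

The model is then trained on texts like these with the same next-word prediction objective as in pretraining, often counting only the response part toward the loss, so it learns to answer in the demonstrated style.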

3. Reinforcement Learning – Improving Through Feedback

This step uses a technique called Reinforcement Learning from Human Feedback (RLHF).

Here’s how it works:

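- Humans rank several different answers to the same prompt.

- A separate reward model is trained to predict which kinds of answers humans prefer.

- ChatGPT is then adjusted with reinforcement learning to produce answers that score highly: more helpful, polite, and accurate responses.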

This makes ChatGPT feel more intuitive and natural when chatting.
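The reward-model step can be sketched with the pairwise loss commonly used in published RLHF work (for example, OpenAI’s InstructGPT paper): given the scores a reward model assigns to a human-preferred answer and a rejected one, the loss is small when the preferred answer scores higher. The scores below are made-up numbers, and the later reinforcement learning step that tunes the chatbot against the reward model is not shown.

```python
import math


def preference_loss(score_chosen, score_rejected):
    """Pairwise loss for training a reward model from human rankings.

    Equals -log(sigmoid(score_chosen - score_rejected)): near 0 when the
    human-preferred answer gets a clearly higher score, large when it does not.
    """
    margin = score_chosen - score_rejected
    return math.log1p(math.exp(-margin))


# Hypothetical reward-model scores for two answers to the same prompt.
print(preference_loss(2.0, -1.0))  # reward model agrees with the human ranking: low loss
print(preference_loss(-1.0, 2.0))  # reward model disagrees: high loss
```

Training nudges the reward model to reduce this loss across many human comparisons, so its scores come to reflect which answers people tend to prefer.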

How ChatGPT Understands Your Question

When you type a message, ChatGPT does not “read” it like a person.

Instead, your text gets turned into numbers.

This process is called tokenization: your text is split into tokens, small pieces of text, and each token is mapped to a number.

A token may be:

- a word

- half a word

- a punctuation mark

Example –

“ChatGPT is smart” → might become something like [1034, 38, 2150, 22, 8049] (made-up IDs; a word like “ChatGPT” may be split into several tokens)

Once the text becomes numbers, the neural network processes it through its layers.

It does not understand meaning like humans do.

It detects patterns and relationships between tokens.
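The splitting step can be sketched with a greedy longest-match tokenizer over a tiny made-up vocabulary. This is an assumption for illustration: ChatGPT’s real tokenizer is based on byte-pair encoding (BPE) with a vocabulary of tens of thousands of pieces, and the `VOCAB` entries and IDs below are invented.

```python
# Hypothetical tiny vocabulary mapping text pieces to IDs.
VOCAB = {"Chat": 1034, "G": 38, "PT": 2150, " is": 22, " smart": 8049, "!": 0}


def tokenize(text, vocab):
    """Greedy longest-match: at each position, take the longest vocabulary piece that fits."""
    ids, i = [], 0
    longest = max(len(piece) for piece in vocab)
    while i < len(text):
        for size in range(min(longest, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab:
                ids.append(vocab[piece])
                i += size
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return ids


print(tokenize("ChatGPT is smart!", VOCAB))
```

In this toy vocabulary “ChatGPT” becomes three tokens (“Chat”, “G”, “PT”), which is how a token can end up being only part of a word.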

How It Generates an Answer

After processing your message, the model generates a reply one token at a time.

For each new token, it:

- Calculates a probability for every possible next token in its vocabulary.

- Picks one, either the most likely or by sampling according to those probabilities.

- Adds it to the text and repeats the process until the answer is complete.

It’s similar to how your phone “predicts” your next word, but on a far more advanced level.
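The generation loop can be sketched in a few lines of Python. The “model” here is a hypothetical lookup table of raw scores that only looks at the last token (a real model looks at all previous tokens), and the loop uses greedy selection, always picking the most likely token.

```python
import math

# Hypothetical stand-in for the neural network: raw scores (logits) for the next token.
TOY_SCORES = {
    "<start>": {"The": 2.0, "A": 1.0},
    "The": {"sky": 2.5, "cat": 1.5},
    "sky": {"is": 3.0, "was": 1.0},
    "is": {"blue": 2.0, "clear": 1.8},
    "blue": {"<end>": 4.0, "and": 0.5},
}


def toy_model(tokens):
    """Simplification: only the last token matters here."""
    return TOY_SCORES[tokens[-1]]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def generate(max_tokens=10):
    tokens = ["<start>"]
    for _ in range(max_tokens):
        probs = softmax(toy_model(tokens))    # 1. probabilities for each candidate
        next_tok = max(probs, key=probs.get)  # 2. greedy choice: the most likely token
        if next_tok == "<end>":
            break
        tokens.append(next_tok)               # 3. extend the text and repeat
    return " ".join(tokens[1:])


print(generate())
```

The special `<start>` and `<end>` markers are assumptions of this sketch; they stand in for the way real systems signal where a reply begins and stops.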

Why ChatGPT Sounds Human

ChatGPT sounds natural because:

- It has seen millions of conversations.

- It has learned writing styles from countless examples.

- It predicts text that resembles human speech.

But it does not actually:

- think

- feel

- have opinions

- understand meaning

What you experience is a highly advanced pattern-matching system.

Why ChatGPT Sometimes Makes Mistakes

Even powerful models fail sometimes.

Here’s why:

1. It Doesn’t Know Everything

It only knows the patterns in its training data.

If something is missing or outdated, it may guess incorrectly.

2. It Predicts, It Doesn’t Verify

ChatGPT generates the most likely answer, not necessarily the true one. This is why it can state false information confidently, a problem often called “hallucination.”

3. It Can’t Access the Internet Directly

Unless it is connected to external tools such as web search, it cannot check real-time facts; its built-in knowledge stops at its training cutoff.

4. Ambiguous Questions Can Mislead It

If a question has multiple meanings, the model may choose the wrong interpretation.

Why ChatGPT Gives Different Answers to the Same Question

This is intentional.

AI models use a setting called temperature, which controls how much randomness (or “creativity”) goes into choosing each word.

- Low temperature → focused, consistent

- High temperature → creative, varied

ChatGPT mixes predictability with variation so responses feel more natural.
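Temperature can be sketched as dividing the model’s raw scores by a number before turning them into probabilities and sampling. The scores for “blue”, “clear”, and “purple” below are hypothetical, and the seeded random generator is only there so runs are repeatable.

```python
import math
import random


def sample_with_temperature(logits, temperature, rng):
    """Divide scores by the temperature, softmax them, then draw one token at random."""
    scaled = {tok: s / temperature for tok, s in logits.items()}  # temperature must be > 0
    m = max(scaled.values())
    weights = {tok: math.exp(s - m) for tok, s in scaled.items()}
    total = sum(weights.values())
    probs = {tok: w / total for tok, w in weights.items()}
    choice = rng.choices(list(probs), weights=list(probs.values()), k=1)[0]
    return choice, probs


logits = {"blue": 2.0, "clear": 1.5, "purple": 0.2}  # hypothetical next-word scores
rng = random.Random(0)                                 # fixed seed for repeatable runs
for t in (0.2, 1.0, 2.0):
    choice, probs = sample_with_temperature(logits, t, rng)
    print(f"T={t}: picked {choice!r}, probs={ {tok: round(p, 2) for tok, p in probs.items()} }")
```

At low temperature nearly all the probability lands on “blue”, so the output barely changes between runs; at high temperature the alternatives get a real chance. A temperature close to 0 behaves almost like always picking the most likely token.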

Does ChatGPT Store Conversations?

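Not in the way many people assume.

The model itself does not learn from or permanently remember your conversations: chatting with it does not change its underlying weights. However, the ChatGPT service may save your chat history, and optional features like memory can carry details you have shared into future chats. How that data is stored and used depends on your settings and OpenAI’s policies.

Each reply is generated from the current conversation (plus any saved memory), which is sent to the model again every time.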

Why the Model Feels Like It Understands You

This is an illusion created by:

- prediction

- context windows

- conversational training

The model keeps track of recent messages in your chat using a context window, which can hold thousands of words (often far more in newer models) at once.

This lets it:

- continue the topic

- refer to earlier messages

- maintain style or tone

It feels like memory, but it’s temporary context, not permanent memory.
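One simple way to keep a conversation inside a fixed context window is to keep the newest messages and drop the oldest once a budget is exceeded. The sketch below assumes that strategy and counts words instead of real tokens; `count_tokens` and `fit_context` are hypothetical helpers, not part of any ChatGPT API.

```python
def count_tokens(text):
    """Crude stand-in: one token per word. Real tokenizers split text differently."""
    return len(text.split())


def fit_context(messages, budget):
    """Keep the most recent messages whose total size fits within the budget."""
    kept, used = [], 0
    for msg in reversed(messages):  # walk from newest to oldest
        size = count_tokens(msg)
        if used + size > budget:
            break                   # this message and everything older are dropped
        kept.append(msg)
        used += size
    return list(reversed(kept))     # restore chronological order


chat = [
    "User: My name is Priya.",
    "Assistant: Nice to meet you, Priya!",
    "User: Suggest a book about space.",
    "Assistant: Try Cosmos by Carl Sagan.",
    "User: Who wrote it again?",
]
print(fit_context(chat, budget=18))
```

With an 18-word budget, the two oldest messages fall out of the window, so this toy assistant would no longer “see” the user’s name. Real systems count actual tokens and may use other strategies, such as summarizing older messages.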

The Future of Models Like ChatGPT

AI models will continue evolving in several ways:

- Longer context windows

- Better reasoning ability

- Fewer hallucinations (confident but false answers)

- More accurate real-world knowledge

- Integration with tools (search, calculators, apps)

Future versions will likely behave more like assistants that help you work, learn, and create with fewer mistakes.

Final Thoughts

AI models like ChatGPT are powerful because they combine:

- massive training data

- mathematical pattern recognition

- advanced neural networks

- human feedback

They don’t think like humans, but they predict text so well that the results feel almost human.

This mix of paragraphs and point form should give you a clear, detailed understanding without overwhelming technical jargon.